Tech community party in Boston

Harvard Free Culture, ROFLCon, and Public Radio Exchange Proudly Present…

INFORMATION SUPERHIGHWAY TWO: THE SECRET HEADQUARTERS EDITION

A Gathering Of Boston Tech

November 29th, 2008, 8:00 – 12:00

Berkman Squared, 50 Church Street, Cambridge MA

RSVP on Upcoming: http://upcoming.yahoo.com/event/1369339/

Boston is full of cool Internet people. Why aren’t they meeting each other?

INFORMATION SUPERHIGHWAY is Boston’s monthly party, bringing together hackers, activists, artists, designers, nonprofits, startups, academics and general geekery to hang out and connect with one another.

*No agenda, no “networking,” no presentations. Just beverages, food, ideas and cool people.

*Best of all, the price is free, just like your courtesy black helicopter flight to A Secure Undisclosed Location.

*This time: come out and meet Boston’s Secret Masters of Hidden Hackspace, Homebrew Mad Science, and Cyber Revolution.

*Also: hear about our scheme to rent a decommissioned missile silo, and how you can too, for less than $10 a month. (No, seriously.)

With Featured Guests and Organizations:

*Jason Bobe (DIYBio)

*Meredith Garniss and Andrew Sempere (Willoughby and Baltic)

*Alex Hornstein (NUBLabs) (FabLab)

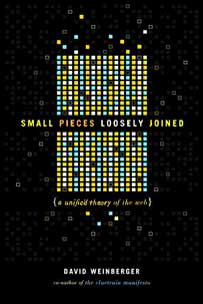

*David Weinberger (Joho The Blog) (The Berkman Center for Internet and Society)

*Jake Shapiro (The Public Radio Exchange)

*Jason Scott (Textfiles)

*Matt Lee (The Free Software Foundation)
