October 9, 2024

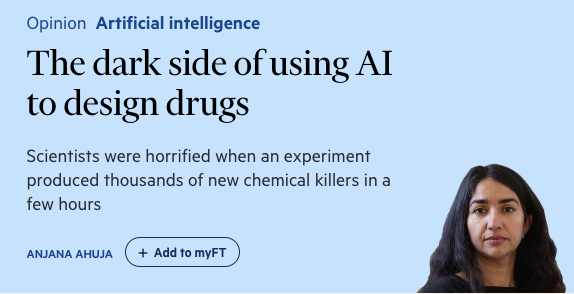

The article notes that the prize actually went to two humans, but this headline from MIT Tech Review may just be ahead of its time. Are we one generation of tech away from a Nobel Prize going to a machine itself, assuming the next generation is more autonomous in choosing which problems it works on?