September 26, 2017

[liveblog][PAIR] Antonio Torralba on machine vision, human vision

At the PAIR Symposium, Antonio Torralba asks why image identification has traditionally gone so wrong.

NOTE: Live-blogging. Getting things wrong. Missing points. Omitting key information. Introducing artificial choppiness. Over-emphasizing small matters. Paraphrasing badly. Not running a spellpchecker. Mangling other people’s ideas and words. You are warned, people.

If we train on Google Images of bedrooms, we’re training on idealized photos, not the real world. It’s a biased set. Likewise for mugs, where the handles in images are almost all on the right side, not the left.

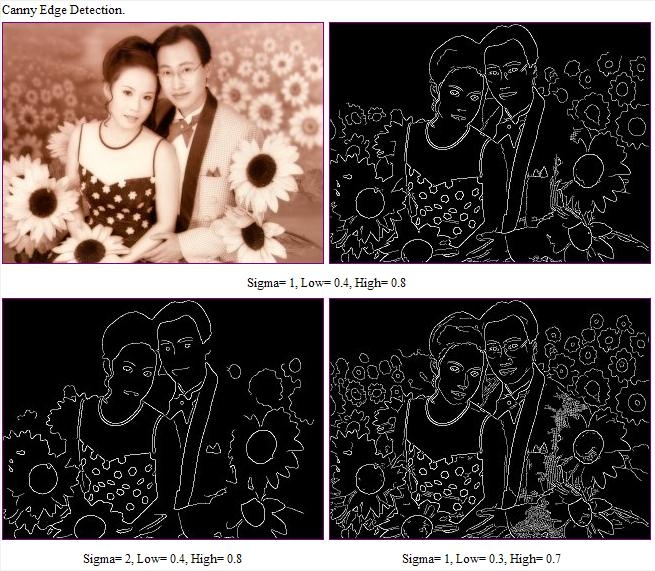

Another issue: The Canny edge detector (for example) detects edges and passes a black-and-white reduction on to the next level. “All the information is gone!” he says, showing that a messy set of white lines on black is in fact an image of a palace. [Maybe the White House?]

[Image: a different example of edge detection.]

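To make the information-loss point concrete, here is a minimal sketch of a gradient-threshold edge detector in plain Python. It is deliberately much simpler than Canny (which adds Gaussian smoothing, non-maximum suppression, and hysteresis thresholding), and the tiny test images are made up. Two quite different images produce the identical binary edge map, so the map alone can't tell you what the original looked like.

```python
def edge_map(image, threshold):
    """Binary edge map: 1 where the local gradient magnitude exceeds `threshold`.

    `image` is a list of rows of grayscale intensities. Border pixels are left as 0.
    This is a simplified gradient detector, not the full Canny algorithm.
    """
    height, width = len(image), len(image[0])
    edges = [[0] * width for _ in range(height)]
    for y in range(1, height - 1):
        for x in range(1, width - 1):
            # Central differences approximate the horizontal and vertical gradients.
            gx = image[y][x + 1] - image[y][x - 1]
            gy = image[y + 1][x] - image[y - 1][x]
            magnitude = (gx * gx + gy * gy) ** 0.5
            edges[y][x] = 1 if magnitude > threshold else 0
    return edges


# Two made-up images with very different intensities but the same step location.
dark_to_bright = [[0, 0, 0, 255, 255, 255] for _ in range(5)]
gray_to_white = [[100, 100, 100, 200, 200, 200] for _ in range(5)]

print(edge_map(dark_to_bright, 50) == edge_map(gray_to_white, 50))  # True
```

Everything about the original brightness levels is discarded; only "edge here / no edge here" survives to the next stage.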

Deep neural networks work well, and can be trained to recognize places in images, e.g., beach, hotel room, street. You train your neural net and it becomes a black box. E.g., how can it recognize that a bedroom is in fact a hotel room? Maybe it’s the lamp? But you trained it to recognize places, not objects. It works but we don’t know how.

When training a system on place detection, we found some units in some layers were in fact doing object detection. One was finding the lamps. Another unit was detecting cars, another detected roads. This lets us interpret the neural network’s work. In this case, you could put names to more than half of the units.

How to quantify this? How is the representation being built? For this: Network dissection. This shows that when training a network on places, objects emerge. “The network may be doing something more interesting than your task”: object detection is harder than place detection.
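Network dissection, roughly: threshold a unit's activation map into a binary mask, compare it against pixel-level masks for labeled concepts, and name the unit after the concept with the highest intersection-over-union (IoU), provided that score clears a cutoff. The sketch below is a toy, stdlib-only version under stated assumptions: activations are already upsampled to image resolution and flattened, and the thresholds and concept masks are made-up illustration values, not the ones used in the research.

```python
def iou(mask_a, mask_b):
    """Intersection-over-union of two equal-length 0/1 masks."""
    intersection = sum(1 for a, b in zip(mask_a, mask_b) if a and b)
    union = sum(1 for a, b in zip(mask_a, mask_b) if a or b)
    return intersection / union if union else 0.0


def label_unit(activations, concept_masks, activation_threshold, iou_cutoff):
    """Return (concept, score) for the best-matching concept, or (None, score) if none clears the cutoff.

    activations: flattened per-pixel activations of one unit (assumed already upsampled).
    concept_masks: dict mapping a concept name to a flattened 0/1 segmentation mask.
    """
    unit_mask = [1 if a > activation_threshold else 0 for a in activations]
    scores = {name: iou(unit_mask, mask) for name, mask in concept_masks.items()}
    best = max(scores, key=scores.get)
    if scores[best] > iou_cutoff:
        return best, scores[best]
    return None, scores[best]


# Hypothetical unit and concept masks over a 6-pixel "image".
activations = [0.9, 0.8, 0.1, 0.0, 0.7, 0.2]
concepts = {
    "lamp": [1, 1, 0, 0, 1, 1],
    "car": [0, 0, 1, 1, 0, 0],
}
print(label_unit(activations, concepts, activation_threshold=0.5, iou_cutoff=0.04))
# ('lamp', 0.75)
```

Run over every unit, this kind of scoring is what lets you "put names to" units that were never trained to detect objects.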

We currently train systems by gathering labeled data. But small children learn without labels. Children are self-supervised systems. So, take in the RGB values of frames of a movie, and have the system predict the sounds. When you train a system this way, it kind of works. If you want to predict the ambient sounds of a scene, you have to be able to recognize the objects, e.g., the sound of a car. To solve this, the network has to do object detection. That’s what they found when they looked into the system. It was doing face detection without having been trained to do that. It also detects baby faces, which make a different type of sound. It detects waves. All through self-supervision.
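The trick in self-supervision is that the "label" comes from the data itself. Here is a minimal sketch of how training pairs might be built from a movie, assuming the audio is a list of samples at a known sample rate and the video runs at a fixed frame rate: for each frame, compute a target from the audio window centered on that frame. Loudness (RMS) is only a stand-in; a real system would predict much richer sound features, and the function name and parameters are hypothetical.

```python
import math


def sound_targets(samples, sample_rate, fps, num_frames, window_seconds):
    """For each video frame, return the RMS loudness of the audio window centered on it.

    Zipping the result with the frames yields (frame, target) training pairs
    without any human labeling.
    """
    half_window = int(window_seconds * sample_rate / 2)
    targets = []
    for frame_index in range(num_frames):
        # Audio sample index that lines up with this frame's timestamp.
        center = int(frame_index * sample_rate / fps)
        start = max(0, center - half_window)
        end = min(len(samples), center + half_window)
        window = samples[start:end]
        if window:
            targets.append(math.sqrt(sum(s * s for s in window) / len(window)))
        else:
            targets.append(0.0)
    return targets


# Made-up data: one second of silence followed by one second of a constant tone.
audio = [0.0] * 8 + [1.0] * 8
targets = sound_targets(audio, sample_rate=8, fps=1, num_frames=2, window_seconds=1.0)
# First frame is silent (0.0); the second straddles the onset (about 0.707).
```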

Other examples: On the basis of one segment, predict the next in the sequence. Colorize images. Fill in an empty part of an image. These systems work, and do so by detecting objects without having been trained to do so.
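Each of these tasks manufactures its own supervision from unlabeled data. A minimal sketch, assuming images are small lists of pixel rows and sequences are plain lists; the helper names are hypothetical. The grayscale conversion uses the common ITU-R BT.601 luma weights (0.299, 0.587, 0.114).

```python
def next_step_pairs(sequence, context_len):
    """Predict-the-next-item pairs: (preceding context, next item)."""
    return [
        (sequence[i:i + context_len], sequence[i + context_len])
        for i in range(len(sequence) - context_len)
    ]


def colorization_pair(rgb_image):
    """Colorization pair: input is the grayscale image, target is the original color image."""
    gray = [
        [round(0.299 * r + 0.587 * g + 0.114 * b) for (r, g, b) in row]
        for row in rgb_image
    ]
    return gray, rgb_image


def inpainting_pair(image, top, left, size):
    """Fill-in pair: input has a square hole (None values), target is the missing patch."""
    masked = [row[:] for row in image]
    patch = []
    for y in range(top, top + size):
        patch.append(image[y][left:left + size])
        for x in range(left, left + size):
            masked[y][x] = None
    return masked, patch


print(next_step_pairs([1, 2, 3, 4], 2))  # [([1, 2], 3), ([2, 3], 4)]
```

In every case the target was already sitting in the data; the network only has to learn to recover it, and detecting objects turns out to help.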

Conclusions: 1. Neural networks build representations that are sometimes interpretable. 2. The representation might solve a task that’s even more interesting than the primary task. 3. Understanding how these representations are built might allow new approaches for unsupervised or self-supervised training.

[liveblog][PAIR] Maya Gupta on controlling machine learning

At the PAIR symposium, Maya Gupta, who runs Glass Box at Google (it looks at black box issues), is talking about how we can control machine learning to do what we want.

The core idea of machine learning is its role models, i.e., its training data. That’s the best way to control machine learning. She’s going to address this by looking at the goals of controlling machine learning.

A simple example of monotonicity. Let’s say we’re trying to recommend nearby coffee shops. So we use data about the happiness of customers and distance from the shop. We can fit the data to a linear model. Or we can fit it to a curve, which works better for nearby shops but goes wrong for distant shops. That’s fine for Tokyo but terrible for Montana because it’ll be sending people many miles away. A monotonic model says we don’t want to do that. This controls ML to make it more useful. Conclusion: the best ML has the right examples and the right kinds of flexibility. [Hard to blog this without her graphics. Sorry.] See “Deep Lattice Networks for Learning Partial Monotonic Models,” NIPS 2017; it will soon be on the TensorFlow site.
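The talk's tool is deep lattice networks. As a much simpler stand-in for the same idea, here is isotonic regression with the pool-adjacent-violators algorithm, which finds the least-squares fit constrained to be non-increasing in distance. The coffee-shop numbers are made up. Whatever wiggles the data has, the fitted happiness never goes up as shops get farther away.

```python
def fit_nonincreasing(ys):
    """Least-squares fit to `ys` constrained to be non-increasing (pool adjacent violators).

    `ys` must already be ordered by increasing distance.
    """
    blocks = []  # each block is [sum of values, count of values]
    for y in ys:
        blocks.append([y, 1])
        # A violation means an earlier block has a lower mean than a later one; pool them.
        while len(blocks) > 1 and blocks[-2][0] / blocks[-2][1] < blocks[-1][0] / blocks[-1][1]:
            total, count = blocks.pop()
            blocks[-1][0] += total
            blocks[-1][1] += count
    fitted = []
    for total, count in blocks:
        fitted.extend([total / count] * count)
    return fitted


# Hypothetical average happiness for shops sorted from nearest to farthest.
happiness = [5, 4, 4.5, 3, 3.5, 1]
print(fit_nonincreasing(happiness))  # [5.0, 4.25, 4.25, 3.25, 3.25, 1.0]
```

The bumps at positions 3 and 5 get averaged away instead of being followed, which is exactly the flexibility a monotonic model refuses.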

“The best way to do things for practitioners is to work next to them.”

A fairness goal: e.g., we want to make sure that accuracy in India is the same as accuracy in the US. So, add a constraint that says what accuracy levels we want. Math lets us do that.

Another fairness goal: the rate of positive classifications should be the same in India as in the US, e.g., the rate of students being accepted to a college. In one example, there is an accuracy trade-off in order to get fairness. Her attitude: Just tell us what you want and we’ll do it.
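One simple way to hit an equal-positive-rate target (not necessarily how her group's constrained training does it) is post-processing: pick a separate score threshold for each group so that each group accepts the same fraction. The scores below are hypothetical, and ties at the threshold are ignored. When the groups' underlying rates differ, forcing equal rates this way can cost accuracy, which is the trade-off she mentions.

```python
def positive_rate(scores, threshold):
    """Fraction of candidates whose score meets the threshold."""
    return sum(1 for s in scores if s >= threshold) / len(scores)


def threshold_for_rate(scores, target_rate):
    """Threshold that accepts roughly the top `target_rate` fraction (ties ignored)."""
    ranked = sorted(scores, reverse=True)
    k = round(target_rate * len(ranked))
    if k == 0:
        return float("inf")  # accept no one
    return ranked[k - 1]


# Hypothetical admission scores for applicants in two regions.
scores = {
    "India": [0.9, 0.7, 0.4, 0.2],
    "US": [0.95, 0.9, 0.8, 0.3],
}

shared = 0.75
print({g: positive_rate(s, shared) for g, s in scores.items()})
# {'India': 0.25, 'US': 0.75}

per_group = {g: threshold_for_rate(s, 0.5) for g, s in scores.items()}
print({g: positive_rate(s, per_group[g]) for g, s in scores.items()})
# {'India': 0.5, 'US': 0.5}
```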

Fairness isn’t always relative. E.g., minimize classification errors differently for different regions. You can’t always get what you want, but you sometimes can, or can get close. [paraphrase!] See fatml.org

It can be hard to state what we want, but we can look at examples. E.g., someone hand-labels 100 examples. That’s not enough as training data, but we can train the system so that it classifies those 100 at something like 95% accuracy.

Sometimes you want to improve an existing ML system. You don’t want to retrain because you like the old results. So, you can add in a constraint such as keeping the differences from the original classifications to less than 2%.

You can put all of the above together. See “Satisfying Real-World Goals with Dataset Constraints,” NIPS, 2016. Look for tools coming to TensorFlow.
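In the research these goals are constraints imposed while training. The sketch below, with hypothetical names and made-up predictions, only checks whether a given set of predictions satisfies several of them at once: a cap on the accuracy gap between groups, at least 95% accuracy on a small hand-labeled set, and under 2% churn relative to the old model's outputs. The group-gap cap of 0.05 is an illustrative assumption.

```python
def accuracy(preds, labels):
    return sum(1 for p, y in zip(preds, labels) if p == y) / len(labels)


def churn(new_preds, old_preds):
    """Fraction of examples whose classification changed from the old model."""
    return sum(1 for n, o in zip(new_preds, old_preds) if n != o) / len(old_preds)


def check_constraints(preds, labels, groups, old_preds, anchor_preds, anchor_labels,
                      max_group_gap, min_anchor_accuracy, max_churn):
    """Return a dict mapping each constraint name to whether it is satisfied."""
    by_group = {}
    for p, y, g in zip(preds, labels, groups):
        by_group.setdefault(g, []).append((p, y))
    group_acc = [accuracy([p for p, _ in pairs], [y for _, y in pairs])
                 for pairs in by_group.values()]
    return {
        "group_gap": max(group_acc) - min(group_acc) <= max_group_gap,
        "anchor_accuracy": accuracy(anchor_preds, anchor_labels) >= min_anchor_accuracy,
        "churn": churn(preds, old_preds) <= max_churn,
    }


preds = [1, 0, 1, 1, 0, 1]
labels = [1, 0, 0, 1, 0, 1]
groups = ["India"] * 3 + ["US"] * 3
old_preds = list(preds)                       # no changes from the old model
anchor_labels = [1] * 10 + [0] * 10
anchor_preds = [1] * 10 + [0] * 9 + [1]       # 19 of 20 hand-labeled examples correct

result = check_constraints(preds, labels, groups, old_preds, anchor_preds, anchor_labels,
                           max_group_gap=0.05, min_anchor_accuracy=0.95, max_churn=0.02)
print(result)  # {'group_gap': False, 'anchor_accuracy': True, 'churn': True}
```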

Some caveats about this approach.

First, to get results that are the same for men and women, the data needs to come with labels. But sometimes there are privacy issues about that. “Can we make these fairness goals work without labels?” Research so far says the answer is messy. E.g., if we make ML more fair for gender (because you have gender labels), it may also make it fairer for race.

Second, this approach relies on categories, but individuals don’t always fit into categories. But usually, if you get things right on categories, it works out well in the blended examples.

Maya is an optimist about ML. “But we need more work on the steering wheel.” We’re not always sure where we want to go with this technology. And we need more human-usable controls.

[liveblog][PAIR] Hae Won Park on living with AI

At the PAIR conference, Hae Won Park of the MIT Media Lab is talking about personal social robots for the home.

Home robots no longer look like R2-D2. She shows a 2014 Jibo video.

What sold people is the social dynamic: Jibo is engaged with family members.

She wants to talk about the effect of AI on people’s lives at home.

For example, Google Home changed her morning routine, how she purchases goods, and how she controls her home environment. She shows a 2008 robot called Autom, a weight management coach.

A study showed that the robot kept people at it longer than paper or a computer program did, and people had the strongest “working alliance” with the robot. They also became emotionally engaged with it, personalizing it, giving it names, etc. These users understood it’s just a machine. So why?

She shows a video of Leonardo, a social robot that exhibits bodily cues of emotion. We seem to share a mental model.

They studied how children tell stories and listen to each other. Jin Joo Lee developed a model in which the robot appears to be very attentive as the child tells a story. It notes cues about whether the speaker is engaged. The children were indeed engaged by this reactive behavior.

Researchers have found that social robots activate social thinking, lighting up the social thinking part of the brain. Social modeling occurs between humans and robots too.

Working with children aged 4-6, they studied “growth mindset”: the belief that you can get better if you try hard. Parents and teachers have been shown to affect this. They created a growth mindset robot that plays a game with the child. The robot encourages the child at times determined by a Boltzmann Machine (en.wikipedia.org/wiki/Boltzmann_machine). [Over my head.]

Their research showed that playing puzzles with a growth-mindset robot fosters that mindset in children. For example, the children tried harder over time.

They also studied early literacy education using personalized robot tutors, in multiple studies with about 120 children. The robot, among other things, encourages the child to tell stories. Over four weeks, they found children learned vocabulary more effectively, and when the robot told stories more expressively (rather than speaking in an affectless TTY voice), the children retained more and mimicked that expressiveness.

Now they’re studying fully autonomous storytelling robots. The robot uses the child’s responses to further engage the child. The children respond more, tell longer stories, and stay engaged over longer periods across sessions.

We are headed toward a time when robots are human-centered rather than task-focused. So we need to think about making AI not just human-like but humanistic. We hope to make AI that makes us better people.

[liveblog][PAIR] Karrie Karahalios

At the Google PAIR conference, Karrie Karahalios is going to talk about how people make sense of their world and lives online. (This is an information-rich talk, and Karrie talks quickly, so this post is extra special unreliable. Sorry. But she’s great. Google her work.)

Today, she says, people want to understand how the information they see comes to them. Why does it vary? “Why do you get different answers depending on your wifi network?” These algorithms also affect our personal feeds, e.g., Instagram and Twitter; Twitter articulates it, but doesn’t tell you how it decides what you will see.

In 2012, Christian Sandvig and [missed first name] Holbrook were wondering why they were getting odd personalized ads in their feeds. Most people were unaware that their feeds are curated: only 38% were aware of this in 2012. Those who were aware became aware through “folk theories”: non-authoritative explanations that let them make sense of their feed. Four theories:

1. Personal engagement theory: The more you like and click on someone, the more of that person you’ll see in your feed. Some people were liking their friends’ baby photos, but got tired of it.

2. Global population theory: If lots of people like something, it will show up on more people’s feeds.

3. Narcissist: You’ll see more from people who are like you.

4. Format theory: Some types of things get shared more, e.g., photos or movies. But people didn’t get

Kempton studied thermostats in the 1980s. People thought of a thermostat either as a feedback switch or as a valve. He looked at their usage patterns. Regardless of which theory they held, they made it work for them.

She shows an Orbitz page that spits out flights. You see nothing under the hood. But someone found out that if you used a Mac, your prices were higher. People started using designs that show the seams. So, Karrie’s group created a view that showed users their feed alongside all the content from their network, which was three times bigger than what they saw. For many, this was like awakening from the Matrix. More important, they realized that their friends weren’t “liking” or commenting because the algorithm had kept their friends from seeing what they posted.

Another tool shows who you are seeing posts from and who you are not. This was upsetting for many people.
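The two views described above amount to simple bookkeeping once you have both the full set of posts from a user's network and the subset the curated feed actually showed. A minimal sketch with hypothetical data and function names (this is not the group's actual tool):

```python
from collections import Counter


def visibility_report(all_posts, shown_ids):
    """Map each friend to (posts shown in the feed, posts they actually made).

    all_posts: list of (post_id, friend) pairs for everything the network posted.
    shown_ids: set of post ids that appeared in the curated feed.
    """
    made = Counter(friend for _, friend in all_posts)
    shown = Counter(friend for post_id, friend in all_posts if post_id in shown_ids)
    return {friend: (shown[friend], made[friend]) for friend in made}


def hidden_friends(report):
    """Friends who posted but never appeared in the feed."""
    return sorted(friend for friend, (shown, made) in report.items() if shown == 0 and made > 0)


posts = [(1, "ana"), (2, "ana"), (3, "bo"), (4, "cy"), (5, "cy"), (6, "cy")]
report = visibility_report(posts, shown_ids={1, 4})
print(report)                  # {'ana': (1, 2), 'bo': (0, 1), 'cy': (1, 3)}
print(hidden_friends(report))  # ['bo']
```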

After going through this process people came up with new folk theories. E.g., they thought it must be FB’s wisdom in stripping out material that’s uninteresting one way or another. [paraphrasing].

They let people configure who they saw, which led many to say that FB’s algorithm is actually pretty good; there was little to change.

Are these folk theories useful? Only two are: personal engagement and control panel, because these let you do something. But the tools for tweaking are poor.

How to embrace folk theories: 1. Algorithm probes, to poke and prod. (It would be great, Karrie says, to have open APIs so people could create tools. FB deprecated it.) 2. Seamful interfaces to generate actionable folk theories. Tuning to revert or borrow?

Another control panel UI, built by Eric Gilbert, uses design to expose the algorithms.

She ends with a quote from Richard Dyer: “All technologies are at once technical and also always social…”

[liveblog][PAIR] Jess Holbrook

I’m at the PAIR conference at Google. Jess Holbrook is UX lead for AI. He’s talking about human-centered machine learning.

“We want to put AI into the maker toolkit, to help you solve real problems.” One of the goals of this: “How do we democratize AI and change what it means to be an expert in this space?” He refers to a blog post he did with Josh Lovejoy about human-centered ML. He emphasizes that we are right at the beginning of figuring this stuff out.

Today, someone finds a data set, and finds a problem that that set could solve. You train a model, look at its performance, and decide if it’s good enough. And then you launch “The world’s first smart X. Next step: profit.” But what if you could do this in a human-centered way?

Human-centered design means: 1. Staying proximate. Know your users. 2. Inclusive divergence: reach out and bring in the right people. 3. Shared definition of success: what does it mean to be done? 4. Make early and often: lots of prototyping. 5. Iterate, test, throw it away.

So, what would a human-centered approach to ML look like? He gives some examples.

Instead of trying to find an application for data, human-centered ML finds a problem and then finds a data set appropriate for that problem. E.g., to diagnose plant diseases, assemble tagged photos of plants. Or use ML to personalize a “balancing spoon” for people with Parkinson’s.

Today, we find bias in data sets after a problem is discovered. E.g., ProPublica’s article exposing the bias in ML recidivism predictions. Instead, proactively inspect for bias, as per JG’s prior talk.

Today, models personalize experiences, e.g., keyboards that adapt to you. With human-centered ML, people can personalize their models. E.g., someone here created a raccoon detector that uses images he himself took and uploaded, personalized to his particular pet raccoon.

Today, we have to centralize data to get results. With human-centered ML we’d also have decentralized, federated learning, getting the benefits while maintaining privacy.
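In federated learning, devices train locally and send only model updates, not raw data, to a server that combines them. A minimal sketch of the averaging step used in Federated Averaging (FedAvg), assuming each client reports a flat list of weights plus its number of training examples; the numbers are made up and the local training step is omitted.

```python
def federated_average(client_updates):
    """Example-weighted average of client model weights.

    client_updates: list of (weights, num_examples); weights are equal-length lists.
    The server sees only these weights and counts, never the clients' raw data.
    """
    total_examples = sum(n for _, n in client_updates)
    averaged = [0.0] * len(client_updates[0][0])
    for weights, n in client_updates:
        for i, w in enumerate(weights):
            averaged[i] += w * n / total_examples
    return averaged


updates = [([1.0, 2.0], 1), ([3.0, 6.0], 3)]
print(federated_average(updates))  # [2.5, 5.0]
```

Weighting by example count keeps a client with lots of data from being drowned out by many clients with very little.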

Today there’s a small group of ML experts. [The photos he shows are all of white men, pointedly.] With human-centered ML, you get experts who have non-ML domain expertise, which leads to more makers. You can create more diverse, inclusive data sets.

Today, we have narrow training and testing. With human-centered ML, we’ll judge instead by how systems change people’s lives. E.g., ML for the blind to help them recognize things in their environment. Or real-time translation of signs.

Today, we do ML once. E.g., PicDescBot tweets out amusing misfires of image recognition. With human-centered ML we’ll combine ML and teaching. E.g., a human draws an example, and the neural net generates alternatives. In another example, ML improved on landscapes taken by StreetView, where it learned what is an improvement from a data set of professional photos. Google auto-suggest ML also learns from human input. He also shows a video of Simone Giertz, “Queen of the Shitty Robots.”

He references Amanda Case: “Expanding people’s definition of normal” is almost always a gradual process.

[The photo of his team is awesomely diverse.]

[liveblog] Google AI Conference

I am, surprisingly, at the first PAIR (People + AI Research) conference at Google, in Cambridge. There are about 100 people here, maybe half from Google. The official topic is: “How do humans and AI work together? How can AI benefit everyone?” I’ve already had three eye-opening conversations and the conference hasn’t even begun yet. (The conference seems admirably gender-balanced in audience and speakers.)

The great Martin Wattenberg (half of Wattenberg – Fernanda Viégas) kicks it off, introducing John Giannandrea, a VP at Google in charge of AI, search, and more.

John says that every vertical will be affected by this. “It’s important to get the humanistic side of this right.” He says there are 1,300 languages spoken worldwide, so if you want to reach everyone with tech, machine learning can help. Likewise with health care, e.g., diagnosing retinal problems caused by diabetes. Likewise with social media.

PAIR intends to use engineering and analysis to augment expert intelligence, i.e., professionals in their jobs, creative people, etc. And “how do we remain inclusive? How do we make sure this tech is available to everyone and isn’t used just by an elite?”

He’s going to talk about interpretability, controllability, and accessibility.

Interpretability. Google has replaced all of its language translation software with neural network-based AI. He shows an example of Hemingway translated into Japanese and then back into English. It’s excellent but still partially wrong. A visualization tool shows a cluster of three strings in three languages, showing that the system has clustered them together because they are translations of the same sentence. [I hope I’m getting this right.] Another example: integrated gradients show that the system has identified a photo as a fire boat because of the streams of water coming from it. “We’re just getting started on this.” “We need to invest in tools to understand the models.”

Controllability. These systems learn from labeled data provided by humans. “We’ve been putting a lot of effort into using inclusive data sets.” He shows a tool that lets you visually inspect the data to see the facets present in them. He shows another example of identifying differences to build more robust models. “We had people worldwide draw sketches. E.g., draw a sketch of a chair.” In different cultures people draw different stick-figures of a chair. [See Eleanor Rosch on prototypes.] And you can build constraints into models, e.g., male and female. [I didn’t get this.]

Accessibility. Internal research from YouTube built a model for recommending videos. Initially it just looked at how many users watched it. You get better results if you look not just at the clicks but the lifetime usage by users. [Again, I didn’t get that accurately.]

Google open-sourced TensorFlow, Google’s AI tool. “People have been using it for everything from sorting cucumbers to tracking the husbandry of cows.” Google would never have thought of these applications.

AutoML: learning to learn. Can we figure out how to enable ML to learn automatically? In one case, it looks at models to see if it can create more efficient ones. Google’s AIY lets DIY-ers build AI in a cardboard box, using a Raspberry Pi. John also points to an Android app that composes music. Also, Google has worked with Geena Davis to create software that can identify male and female characters in movies and track how long each speaks. It discovered that movies that have a strong female lead or co-lead do better financially.

He ends by emphasizing Google’s commitment to open sourcing its tools and research.

Fernanda and Martin talk about the importance of visualization. (If you are not familiar with their work, you are leading deprived lives.) When F&M got interested in ML, they talked with engineers: “ML is very different. Maybe not as different as software is from hardware. But maybe. We’re just finding out.”

M&F also talked with artists at Google. He shows photos of imaginary people by Mike Tyka created by ML.

This tells us that AI is also about optimizing subjective factors. ML for everyone: Engineers, experts, lay users.

Fernanda says ML spreads across all of Google, and even across Alphabet. What does PAIR do? It publishes. It’s interdisciplinary. It does education. E.g., TensorFlow Playground: a visualization of a simple neural net used as an intro to ML. They open-sourced it, and the Net has taken it up. Also, a journal called Distill.pub aimed at explaining ML and visualization.

She “shamelessly” plugs deeplearn.js, tools for bringing AI to the browser. “Can we turn ML development into a fluid experience, available to everyone?”

What experiences might this unleash, she asks.

They are giving out faculty grants. And expanding the Brain residency for people interested in HCI and design…even in Cambridge (!).

June 6, 2017

[liveblog] metaLab

Harvard metaLab is giving an informal Berkman Klein talk about their work on designing for ethical AI. Jeffrey Schnapp introduces metaLab as “an idea foundry, a knowledge-design lab, and a production studio experimenting in the networked arts and humanities.” The discussion today will be about metaLab’s various involvements in the Berkman Klein – MIT MediaLab project on ethics and governance of AI. The conference is packed with Fellows and the newly-arrived summer interns.

Matthew Battles and Jessica Yurkofsky begin by talking about Curricle, a “new platform for experimenting with shopping for courses.” How can the experience be richer, more visual, and use more of the information and data that Harvard has? They’ve come up with a UI that has three elements: traditional search, a visualization, and a list of the results.

They’ve been grappling with the ethics of putting forward new search algorithms. The design is guided by transparency, autonomy, and visualization. Transparency means that they make apparent how the search works, allowing students to assign weights to keywords. If Curricle makes recommendations, it will explain that it’s because other students like you have chosen it or because students like you have never done this, etc. Visualization shows students what’s being returned by their search and how it’s distributed.
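As a rough illustration of the transparency principle (not Curricle's actual search), here is a sketch in which the student supplies keyword weights, each course's score is just the sum of the weights of the keywords its description contains, and every result carries an explanation of where its score came from. Course data, weights, and function names are hypothetical.

```python
def score_course(description, weights):
    """Sum of student-assigned weights for keywords found in the description."""
    words = set(description.lower().split())
    matched = {kw: w for kw, w in weights.items() if kw.lower() in words}
    return sum(matched.values()), matched


def search(courses, weights):
    """Return (score, title, explanation) for matching courses, best first."""
    results = []
    for title, description in courses.items():
        score, matched = score_course(description, weights)
        if score > 0:
            explanation = ", ".join(f"{kw} (+{w})" for kw, w in sorted(matched.items()))
            results.append((score, title, explanation))
    results.sort(key=lambda r: (-r[0], r[1]))
    return results


courses = {
    "Robots and Society": "ethics of robots in cities",
    "Intro to Poetry": "reading poetry aloud",
    "Machine Ethics": "ethics of machine learning",
}
for score, title, why in search(courses, {"ethics": 2, "robots": 1}):
    print(f"{title}: {score} because {why}")
# Robots and Society: 3 because ethics (+2), robots (+1)
# Machine Ethics: 2 because ethics (+2)
```

Because the scoring is a plain sum the student controls, the explanation string is the whole story of why a course ranked where it did.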

Similar principles guide a new project, AI Compass, that is the entry point for information about Berkman Klein’s work on the Ethics and Governance of AI project. It is designed to document the research being done and to provide a tool for surveying the field more broadly. They looked at how neural nets are visualized, how training sets are presented, and other visual metaphors. They are trying to find a way to present these resources in their connections. They have decided to use Conway’s Game of Life [which I was writing about an hour ago, which freaks me out a bit]. The game allows complex structures to emerge from simple rules. AI Compass is using animated cellular automata as icons on the site.
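For readers who haven't met it, the Game of Life's rules fit in a few lines: a live cell survives with two or three live neighbors, a dead cell comes alive with exactly three, and every other cell is dead in the next generation. A minimal sketch on an unbounded grid (how AI Compass actually animates its icons isn't described in the talk):

```python
from collections import Counter


def life_step(live_cells):
    """Advance Conway's Game of Life one generation.

    live_cells: set of (row, col) coordinates of live cells on an unbounded grid.
    """
    # Count, for every cell adjacent to a live cell, how many live neighbors it has.
    neighbor_counts = Counter(
        (r + dr, c + dc)
        for r, c in live_cells
        for dr in (-1, 0, 1)
        for dc in (-1, 0, 1)
        if (dr, dc) != (0, 0)
    )
    return {
        cell
        for cell, n in neighbor_counts.items()
        if n == 3 or (n == 2 and cell in live_cells)
    }


# A "blinker" oscillates between a horizontal and a vertical bar.
blinker = {(1, 0), (1, 1), (1, 2)}
print(sorted(life_step(blinker)))  # [(0, 1), (1, 1), (2, 1)]
```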

metaLab wants to enable people to explore the information at three different scales. The macro scale shows all of the content arranged into thematic areas. This lets you see connections among the pieces. The middle scale shows the content with more information. At the lowest scale, you see the resource information itself, as well as connections to related content.

Sarah Newman talks about how AI is viewed in popular culture: the Matrix, Ahnuld, etc. We generally don’t think about AI as it’s expressed in the tools we actually use, such as face recognition, search, recommendations, etc. metaLab is interested in how art can draw out the social and cultural dimensions of AI. “What can we learn about ourselves by how we interact with, tell stories about, and project logic, intelligence, and sentience onto machines?” The aim is to “provoke meaningful reflection.”

One project is called “The Future of Secrets.” Where will our email and texts be in 100 years? And what does this tell us about our relationship with our tech? Why and how do we trust them? It’s an installation that’s been at the Museum of Fine Arts in Boston and recently in Berlin. People enter secrets that are printed out anonymously. People created stories, most of which weren’t true, often about the logic of the machine. People tended to project much more intelligence on the machine than was there. Cameras were watching and would occasionally print out images from the show itself.

From this came a new piece (done with fellow Rachel Kalmar) in which a computer reads the secrets out loud. It will be installed at the Berkman Klein Center soon.

Working with Kim Albrecht in Berlin, the center is creating data visualizations based on the data that a mobile phone collects, including accelerometer data. These visualizations let us see how the device is constructing an image of the world we’re moving through. That image is messy, noisy.

The lab is also collaborating on a Berlin exhibition, adding provocative framing using X degrees of Separation. It finds relationships among objects from disparate cultures. What relationships do algorithms find? How does that compare with how humans do it? What can we learn?

Starting in the fall, Jeffrey and a co-teacher are going to be leading a robotics design studio, experimenting with interior and exterior architecture in which robotic agents are copresent with human actors. This is already happening, raising regulatory and urban planning challenges. The studio will also take seriously machine vision as a way of generating new ways of thinking about mobility within city spaces.

Q&A

Q: me: For AI Compass, where’s the info coming from? How is the data represented? Open API?

Matthew: It’s designed to focus on particular topics. E.g., Youth, Governance, Art. Each has a curator. The goal is not to map the entire space. It will be a growing resource. An open API is not yet on the radar, but it wouldn’t be difficult to do.

Q: At the AI Advance, Jonathan Zittrain said that organizations are a type of AI: governed by a set of rules, they grow and learn beyond their individuals, etc.

Matthew: We hope to deal with this very capacious approach to AI through artists. What have artists done that bears on AI beyond the cinematic tropes? There’s a rich discourse about this. We want to be in dialogue with all sorts of people about this.

Q: About Curricle: Are you integrating Q results [student responses to classes], etc.?

Sarah: Not yet. There’s mixed feeling from administrators about using that data. We want Curricle to encourage people to take new paths. The Q data tends to encourage people down old paths. Curricle will let students annotate their own paths and share them.

Jeffrey: We’re aiming at creating a curiosity engine. We’re working with a century of curricular data. This is a rare privilege.

me: It’d enrich the library if the data about resources was hooked into LibraryCloud.

Q: kendra: A useful feature would be finding a random course that fits into your schedule.

A: In the works.

Q: It’d be great to have transparency around the suggestions of unexpected courses. We don’t want people to be choosing courses simply to be unique.

A: Good point.

A: The same tool that lets you diversify your courses also lets you concentrate all of them into two days in classrooms near your dorm. Because the data includes courses from all the faculty, being unique is actually easy. The challenge is suggesting uniqueness that means something.

Q: People choose courses in part based on who else is choosing that course. It’d be great to have friends in the platform.

A: Great idea.

Q: How do you educate the people using the platform? How do you present and explain the options? How are you going to work with advisors?

A: Important concerns at the core of what we’re thinking about and working on.